Note: this is part of an ongoing series about a little-understood but critical issue for local governments in the intelligence era: owning the institutional mind.

Local government administrators are connoisseurs of professional pain. They live with the fifteen-year-old permitting system that everyone despises but no one dares replace. They navigate the GIS platform that increases in price annually while adding features that sound impressive but aren’t. They manage the tax system that “only one vendor really knows how to support,” usually described in the same tone one reserves for haunted houses.

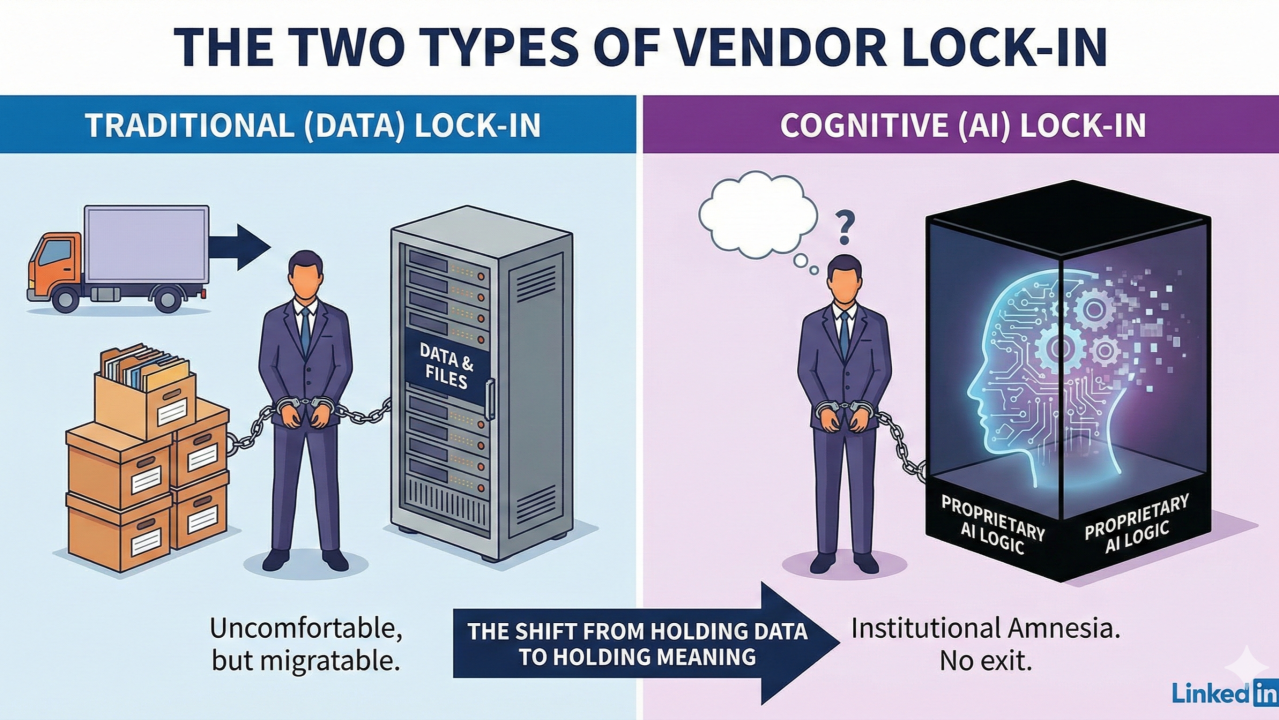

This is the familiar misery of vendor lock-in. It is uncomfortable, expensive, and quietly demoralizing. Yet it is also, in a perverse way, survivable. Even inside the most stubborn legacy system, you still own the ordinances. You still own the tax rolls. You still understand how your rules work, even when the software treats that understanding as optional. You can endure. You can complain in well-practiced ways. And if the pain becomes sufficiently motivating, you can migrate.

AI introduces a different species of lock-in altogether. It is a shift from losing access to your data to losing control over your meaning.

In the AI era, vendors are no longer just hosting your data. They are hosting your institution’s understanding of itself.

From Data Lock-In to Cognitive Lock-In

Traditional lock-in revolves around format. Cognitive lock-in revolves around interpretation.

When a vendor trains a black-box system on your ordinances, policies, and workflows, they are not filing documents neatly in a digital cabinet. They are encoding how your government thinks. They are mapping the “load-bearing beams” of your logic, the intentional ambiguities, the departmental hierarchies, and the unwritten exceptions that make the county run.

Leaving a traditional system resembles moving houses. It is disruptive, involves too many boxes, and you’ll likely break a few things, but you remain recognizably yourself at the end. Leaving a proprietary AI system looks more like a digital lobotomy. You get your PDFs back, but not the synaptic connections that made them usable.

In a traditional system, when a senior planner retires, the county loses a person but keeps its records. In a cognitively locked system, when a vendor exits, the county keeps its records but loses the ability to interpret them. Paper-based authority decays slowly; cognitive authority vanishes the moment the contract expires.

The Turnkey Trap

The most dangerous phrase in a local government RFP today is “turnkey AI solution.”

It sounds like relief: intelligence without overhead. But in systems thinking, there is no such thing as a free lunch. What this phrase actually signals is that the vendor will quietly assemble your Knowledge Layer on your behalf and retain exclusive custody of the keys.

This rarely unfolds dramatically. It is a slow boil:

- A chatbot appears on the website.

- Staff correct it when it hallucinates.

- The vendor adjusts the model using those corrections.

- Residents begin to rely on the output; appeals eventually cite it.

Every “that’s not how we do it here” offered by your staff becomes proprietary training data. Eventually, the machine’s explanation becomes the law as experienced. The vendor is no longer hosting software; they are hosting the county’s memory. And because that memory resides inside a proprietary black box, you cannot pack it up and take it with you.

FOIA, Due Process, and “The System Said…”

This is not merely an IT inconvenience. It is a structural failure of due process. Local government rests on a modest but stubborn premise: authority must be traceable. Decisions must be explainable using adopted law and documented policy.

Now imagine the future that arrives without fanfare: A resident prints an AI-generated answer about a zoning variance and attaches it to an appeal. The answer is wrong, but the resident relied on it. Staff cannot reproduce the response. The vendor explains that “the model state has changed.” The county cannot show which rule was applied, or why.

At that moment, the institution lacks an epistemic chain of custody. “The system said so” is not a legal standard.

Traditional systems fail operationally; AI systems fail epistemically. They fail in ways that cannot be inspected, reasoned through, or reconstructed in a courtroom. This is lock-in at the level of administrative law.

The Choice: Ownership or Default

Local governments often believe they are “waiting and seeing” before adopting AI. They are not. By declining to build a sovereign Knowledge Layer, they are pre-authorizing vendor-defined meaning. They are allowing their institutional memory to be assembled by default inside systems they do not control.

The Knowledge Layer functions as the operating system of modern government. Without ownership of that layer, interpretation becomes a rented service. You may still hold your data, but your institution’s mind lives elsewhere.

The question is not whether AI will be adopted. The real question is simpler and more uncomfortable: when it is adopted, who owns the machinery of understanding?

You do not lose your data. You lose your institutional mind.

Author’s Note

Doing nothing is not a neutral stance. It is a decision to let the “municipal junk drawer” be organized by a third party who keeps the only set of keys. Better vendors are not the solution; ownership of institutional memory is.

In the final article of this series, we will explore how small and rural governments can take the first step toward that ownership, without becoming technology companies and without waiting for permission.