NOTE: This is the second article in this series.

Artificial intelligence did not introduce a new problem to local government. It simply made an old one impossible to ignore. Long before anyone embedded a chatbot on a county website, local governments operated with a powerful but informal layer of understanding that sat between data and real-world decisions.

Staff knew that what was written in the ordinance had to be cross-referenced with the layers on the GIS map. They knew that the definition of a “bedroom” in the assessing database might differ subtly from the definition in the zoning code. They remembered that the vague clause in Section 4 was vague on purpose, the result of a specific compromise during a contentious council meeting three years ago.

This layer rarely had a name. It lived in people, habits, and shared memory. AI did not invent this layer. AI exposes it. What follows is not an argument against AI. It is an argument for understanding what AI reveals about how our institutions have always worked.

The Category Error

Most conversations about AI in local government make a quiet but consequential mistake. They collapse distinct assets into one generic pile of “information.”

To understand what is happening, we must separate three things:

- The Model: The system doing the reasoning (e.g., ChatGPT). It provides the capability to process information, not the information itself.

- The Source Data: The disconnected artifacts. Not just ordinances and PDFs, but GIS layers, assessing databases, permit records, and decades of meeting minutes.

- The Meaning: The connective tissue that links those disconnected artifacts into a coherent decision.

A GIS polygon is not a decision. A property record is not context. A model does not “understand” a neighborhood simply because it ingested all the data points.

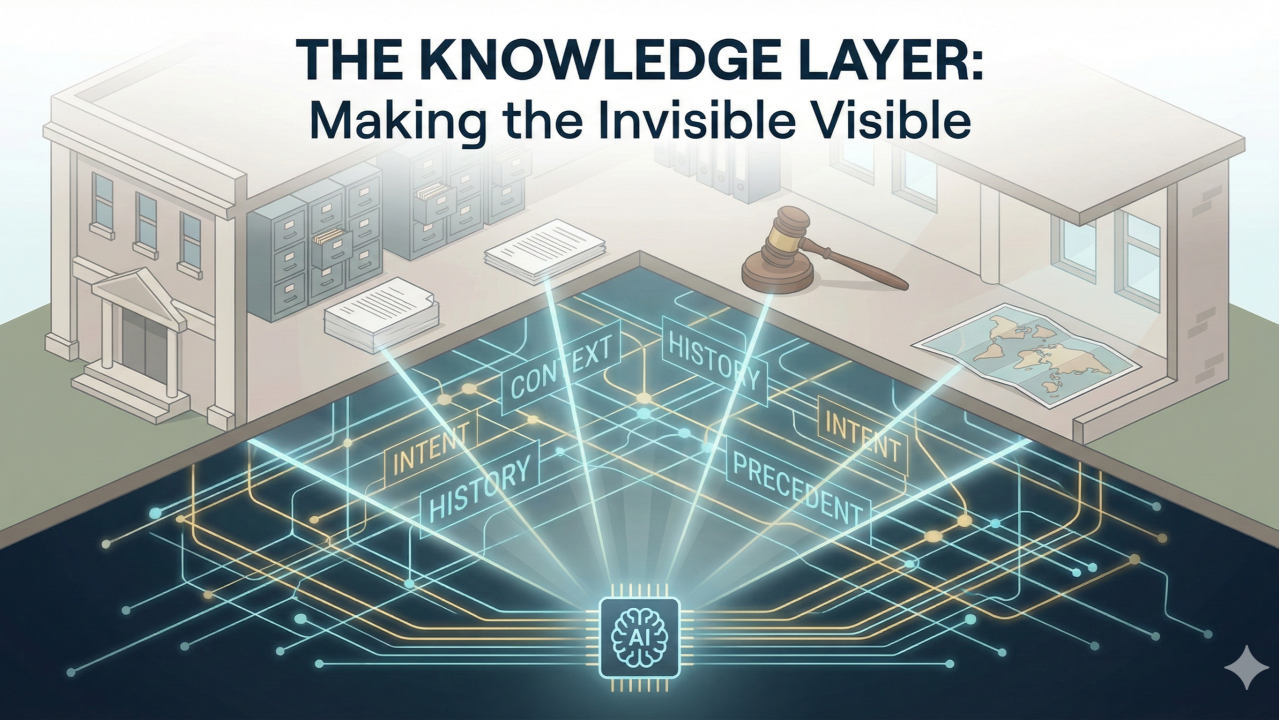

Something has always been required to bridge the gap between scattered data and actual governance. That something is what we are calling the Knowledge Layer.

The Knowledge Layer Was Always There

Local government has never operated as a set of isolated data silos. If it had, you would need three different departments in the room just to answer a simple question about a driveway permit. Instead, synthesis happened socially. A planner wouldn’t just read the zoning code. They would mentally overlay the text onto their knowledge of the town map. They knew that a rule applied differently in the floodplain overlay than on Main Street. They remembered the intent of the council when that overlay was passed. They synthesized text (ordinance), space (GIS), and history (minutes) instantly.

This was not inefficiency. This was governance. Institutional knowledge accumulated through experience, precedent, and judgment. It persisted because it lived in people and was reinforced through daily practice. It was slow, human, and surprisingly durable. As long as interpretation flowed through people, the system worked because humans are naturally good at connecting dots.

What AI Changes

When AI enters this environment, it fundamentally alters how interpretation occurs. AI models are extremely efficient at reading text or querying a database. However, they cannot infer relationships between those systems that were never written down or explicitly connected for them.

When residents and staff begin relying on automated explanations, the machine becomes the default interpreter. If the AI can read the ordinance but cannot “see” the GIS layer that modifies it, it will provide an answer that is faithful to the text but wrong in practice.

AI does not remove human authority, but it bypasses the human process of interpretation. When an automated answer is accepted without scrutiny, the machine has effectively made the decision, regardless of who signs the form. At that point, the synthesis of data stops behaving like a social process and starts behaving like infrastructure.

A Small Glitch With Large Implications

Consider a typical parks ordinance: “No organized athletic events allowed in the square after dusk without a permit.”

- The Human Layer: A park ranger sees a family throwing a baseball at 8:30 PM. Technically, this could qualify as an organized athletic event. Practically, the ranger uses discretion, knowing the spirit of the law was to prevent loud leagues, not families. The system functions as intended.

- The AI Layer, Without Context: A resident asks a city chatbot, “Can my kids play catch in the square after dinner?” The system reads the text literally. It sees “athletic event” and “after dusk.” It responds: “No. This activity is prohibited without a permit.”

The answer is textually accurate and institutionally wrong. No model, no matter how advanced, can infer discretion that has never been made explicit. That missing context is the Knowledge Layer.
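For technically minded readers, the parks example can be reduced to a toy sketch. It is an illustration under stated assumptions, not how any particular chatbot works: the `Clause` type, the activity labels, and the `intended_scope` field standing in for recorded institutional context are all hypothetical. It shows one narrow point: with no recorded context, a literal reading is the only answer available; once staff record the clause's intent, the same question can be handled differently.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Clause:
    """One ordinance clause plus (optionally) recorded institutional context."""
    text: str
    # Activity labels the literal wording appears to cover (hypothetical labels).
    literal_scope: set[str]
    # Hypothetical Knowledge Layer entry: the activities staff have documented
    # as the clause's actual target. Empty means no context was recorded.
    intended_scope: set[str] = field(default_factory=set)
    intent_source: str = ""


def answer(clause: Clause, activity: str) -> str:
    """Answer a resident's question about one activity under one clause."""
    if activity not in clause.literal_scope:
        return "Not covered by this clause."
    if not clause.intended_scope:
        # No recorded context: a literal reading is all the system has.
        return "No. This activity is prohibited without a permit."
    if activity in clause.intended_scope:
        return "A permit is required."
    # Context says this falls outside the clause's purpose: route, don't rule.
    return (
        f"Likely outside the intent of this clause ({clause.intent_source}). "
        "Contact Parks staff to confirm."
    )


RULE_TEXT = "No organized athletic events allowed in the square after dusk without a permit."

literal_only = Clause(text=RULE_TEXT, literal_scope={"playing catch", "league game"})
with_context = Clause(
    text=RULE_TEXT,
    literal_scope={"playing catch", "league game"},
    intended_scope={"league game"},
    intent_source="hypothetical staff note on council intent",
)

print(answer(literal_only, "playing catch"))  # the literal "No." answer
print(answer(with_context, "playing catch"))  # routed to staff instead
print(answer(with_context, "league game"))    # "A permit is required."
```

The last branch is the design choice worth noticing: even with context, the sketch routes the resident to staff rather than issuing a ruling, which keeps the final judgment with people.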

What the Knowledge Layer Actually Does

If interpretation is now infrastructure, we need to be clear about the scope of that infrastructure. It is not just about defining terms in a PDF.

The Knowledge Layer is the operating system that connects your isolated data silos. Its role is not to make legal determinations, but to explain how official information is structured, applied, and routed within the institution. It handles the critical connections that previously existed only in the heads of experienced staff:

- Connecting Text to Space (GIS): It is knowing that the word “wetland” in an ordinance is not an abstract concept, but corresponds to specific polygons on a GIS layer that trigger a different set of rules.

- Connecting Structure to Intent (Minutes): It is linking a sterile ordinance clause back to the fifteen-minute debate in the 2022 meeting minutes that explains why the clause exists and how it was intended to be applied.

- Connecting Silos (Assessing/Zoning): It is understanding that how the Assessor defines a “finished basement” in the database impacts a zoning question about Accessory Dwelling Units.

In human systems, these connections were made intuitively. In AI-enabled systems, these connections must be engineered.
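Very roughly, "engineering" these connections means turning them into explicit links between records held in different systems. The Python below is a toy illustration under stated assumptions, not a reference design: the `KnowledgeLayer` class, the relation names, and record IDs such as `gis:layer:wetlands` and `minutes:2022` are all hypothetical. It shows a connection a planner once made from memory becoming a stored link that can be listed, reviewed, and corrected.

```python
from collections import deque


class KnowledgeLayer:
    """Explicit, reviewable links between records that live in separate systems."""

    def __init__(self) -> None:
        # Maps a record ID to its outgoing (relation, target ID) links.
        self._links: dict[str, list[tuple[str, str]]] = {}

    def link(self, source: str, relation: str, target: str) -> None:
        """Record that `source` relates to `target` in a named way."""
        self._links.setdefault(source, []).append((relation, target))

    def context_for(self, start: str, max_hops: int = 3) -> list[tuple[str, str, str]]:
        """Breadth-first walk returning (source, relation, target) links
        reachable from `start` within `max_hops` steps."""
        seen = {start}
        queue = deque([(start, 0)])
        found: list[tuple[str, str, str]] = []
        while queue:
            record, depth = queue.popleft()
            if depth == max_hops:
                continue
            for relation, target in self._links.get(record, []):
                found.append((record, relation, target))
                if target not in seen:
                    seen.add(target)
                    queue.append((target, depth + 1))
        return found


# Hypothetical record IDs for the three kinds of connection described above.
kl = KnowledgeLayer()
kl.link("ordinance:term:wetland", "is mapped by", "gis:layer:wetlands")
kl.link("gis:layer:wetlands", "triggers", "ordinance:wetland-rules")
kl.link("ordinance:wetland-rules", "intent explained in", "minutes:2022")
kl.link("assessing:field:finished_basement", "affects", "zoning:question:adu")

for source, relation, target in kl.context_for("ordinance:term:wetland"):
    print(f"{source} --{relation}--> {target}")
```

Starting from the word "wetland", the walk surfaces the GIS layer, the rules it triggers, and the minutes that explain them. A real system would more likely use a knowledge graph or similar store; a plain dictionary simply keeps the idea visible.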

Why Naming This Matters

Implicit knowledge works as long as humans are the primary engine of synthesis. AI changes that engine. When interpretation is automated, implicit knowledge does not disappear. It is reconstructed somewhere else, usually invisibly, inside the system delivering the answer. If the institution does not define the Knowledge Layer explicitly, it will still exist, because decisions still must be made. It will simply be defined by someone else’s algorithm.

Local governments are accustomed to governing what they can see. Budgets are explicit. GIS layers are visible. The Knowledge Layer, the synthesis of it all, has remained largely invisible because people carried it. By naming it, we are identifying the place where authority is already shifting. What cannot be named cannot be governed. What cannot be governed will be outsourced by default. Once named, however, it can begin to be governed.

Which leads naturally to the next question: if the Knowledge Layer is real, and if it now behaves like critical infrastructure, what does it mean to own it? And what the heck do we do with it?

Author’s Note

Readers with a background in AI, data engineering, or knowledge systems will recognize that nothing in this article is conceptually new to the technology world. What we are calling the Knowledge Layer is more commonly discussed using technical terms such as semantics, knowledge graphs, vector representations, embeddings, and retrieval pipelines.

Those ideas are well established in AI practice. What has been largely absent is their translation into the institutional language of local government.

For this article, I intentionally avoided technical terminology. Not to simplify the ideas, but to express them in a form that administrators, clerks, planners, and elected officials can recognize from their daily work. Governance decisions are made in this register, not in system diagrams.

Before something can be governed, it must first be understood by the people responsible for it.

The next article picks up where this one leaves off: if the Knowledge Layer is real, already shaping outcomes, and increasingly embedded in AI systems, what does it actually mean for a local government to own it, and what does ownership require in practice?