Artificial intelligence is entering local government the way most consequential things do: politely, without knocking, and claiming it will save everyone a little time.

It appears as a chatbot on the city website. A tool that summarizes ordinances. A quiet helper that drafts responses faster than a harried analyst between meetings. The conversation usually begins, and often ends, with efficiency. How can we do more with less, preferably before the budget workshop starts?

This framing is comforting. It is also incomplete.

This article is about a new form of infrastructure that local governments are quietly outsourcing, often without realizing it exists.

AI is not merely accelerating tasks. It is steadily becoming the lens through which laws, policies, and institutional knowledge are interpreted. Once that happens, a more unsettling question drifts into view:

Who controls the bridge between your official documents and the answers the machine provides?

This turns out to be less about software and more about infrastructure. And at present, much of that infrastructure is being built on land the public sector does not own.

From Documents to Interpretation

For most of modern local government, authority has lived in two dependable places, neither of which could be accused of glamour.

First, the artifacts. Ordinances, resolutions, policy manuals, and the binder that everyone knows exists but no one has opened since 2009, partly out of respect and partly out of fear.

Second, the people. Staff who remember why a rule was written, how it has been bent, and which clauses are treated as sacred despite never having been tested in court or daylight.

Interpretation happened slowly and socially. It moved through hallway conversations, counter visits, and emails that began with “Per our discussion…” Institutional memory was inefficient, occasionally exasperating, and remarkably resilient. It survived retirements, reorganizations, and at least three software migrations.

AI changes this arrangement in a subtle but profound way. When residents or staff rely on machine-generated explanations, those explanations become the system in practice. The original documents retreat into the background, still authoritative in theory, but consulted mostly when something has already gone wrong.

At that point, authority no longer lives primarily in the text or the people. It lives in whatever logic is translating text into answers. Whoever controls that translation is no longer supporting government operations. They are shaping how government actually functions, one response at a time.

The Knowledge Layer: Your New Blueprints

Most leaders assume AI works like a well-mannered intern with superhuman reading speed. You upload a PDF, ask a question, and the machine politely produces an answer that sounds confident enough to repeat in a meeting.

This works acceptably for a single document. It collapses almost immediately when faced with an actual government organization.

When a resident asks, “Can I build a mother-in-law suite?”, the answer is never located in one place. It lives at the intersection of zoning code, housing policy, fire regulations, planning commission precedent, and at least one sentence that begins with “Historically, we have allowed this when…”

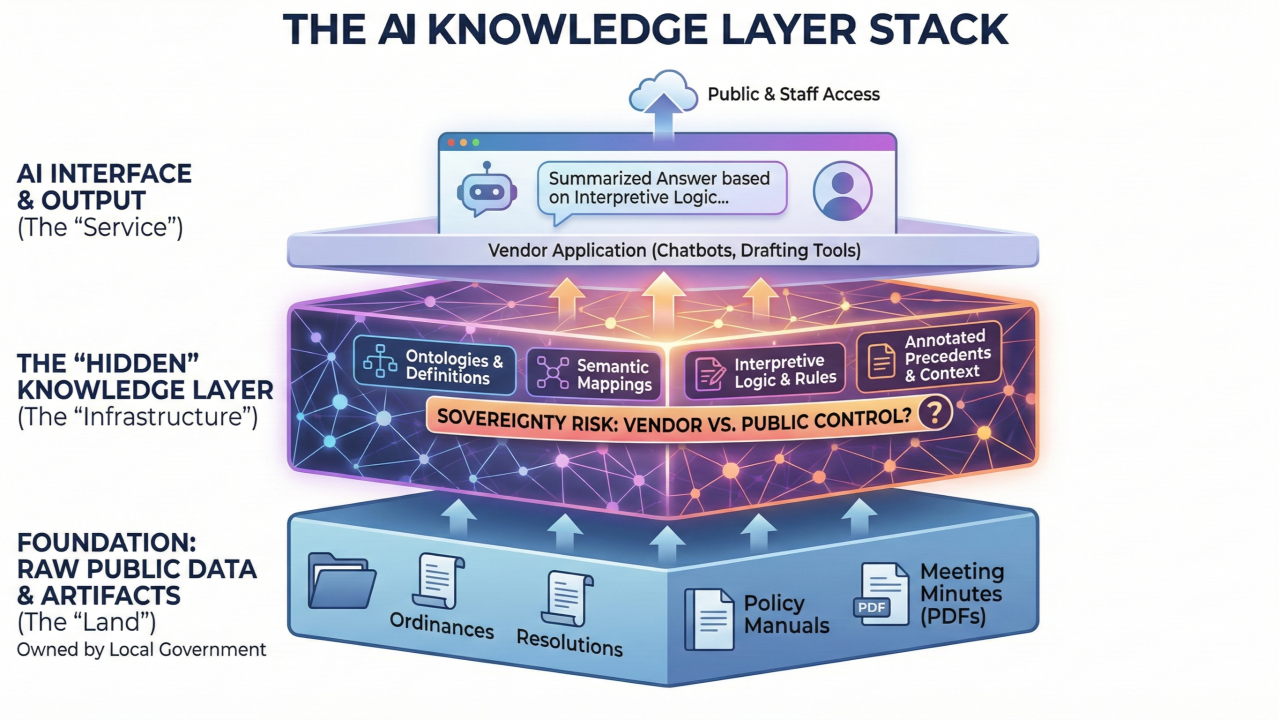

To manage this, AI relies on a hidden layer of structure and logic that most governments never see and rarely discuss. This is what we are calling the Knowledge Layer.

It is not abstract. It consists of very specific assets:

- The Dictionary (Ontology): The quiet decision that “mother-in-law suite,” “accessory dwelling unit,” and “ADU” are the same thing, so the system does not stare blankly at a perfectly reasonable question.

- The Conflict Resolver (Logic): The rule that knows the 2024 Housing Ordinance overrides the 1998 Subdivision Act, even though both remain technically valid and emotionally attached to someone.

- The Memory (Precedent): The marker that treats a particular Planning Commission decision as guidance rather than trivia.
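For readers who think better in code than in metaphor, the three assets above can be sketched in a few dozen lines of Python. This is a minimal illustration, not a description of how any vendor's product works. Every name in it (`ONTOLOGY`, `OVERRIDES`, `governing_rules`, and the rest) is hypothetical, the precedent entries are placeholders, and the override table is deliberately simplistic: it assumes one instrument displaces another outright, where real precedence is usually clause by clause.

```python
from dataclasses import dataclass
from typing import List, Optional

# The Dictionary (Ontology): many everyday phrases, one canonical concept.
ONTOLOGY = {
    "mother-in-law suite": "accessory_dwelling_unit",
    "accessory dwelling unit": "accessory_dwelling_unit",
    "adu": "accessory_dwelling_unit",
}


@dataclass(frozen=True)
class Rule:
    name: str
    topic: str


@dataclass(frozen=True)
class Precedent:
    decision: str
    topic: str
    weight: str  # "guidance" or "trivia"


RULES = [
    Rule("1998 Subdivision Act", "accessory_dwelling_unit"),
    Rule("2024 Housing Ordinance", "accessory_dwelling_unit"),
]

# The Conflict Resolver (Logic): winner -> loser, recorded explicitly.
# Assumption for illustration: an override displaces the whole instrument.
OVERRIDES = {"2024 Housing Ordinance": "1998 Subdivision Act"}

# The Memory (Precedent): placeholder decisions, each marked with a weight.
PRECEDENTS = [
    Precedent("Planning Commission decision A (placeholder)",
              "accessory_dwelling_unit", "guidance"),
    Precedent("Planning Commission decision B (placeholder)",
              "accessory_dwelling_unit", "trivia"),
]


def canonical_term(phrase: str) -> Optional[str]:
    """Map a resident's wording to the canonical concept, if known."""
    return ONTOLOGY.get(phrase.strip().lower())


def governing_rules(topic: str) -> List[Rule]:
    """Return rules on a topic that no other rule has overridden."""
    overridden = set(OVERRIDES.values())
    return [r for r in RULES if r.topic == topic and r.name not in overridden]


def guidance_for(topic: str) -> List[Precedent]:
    """Return only the precedents marked as guidance for a topic."""
    return [p for p in PRECEDENTS if p.topic == topic and p.weight == "guidance"]


def answer_context(phrase: str) -> dict:
    """Assemble what a model would need before drafting an answer."""
    topic = canonical_term(phrase)
    if topic is None:
        return {"topic": None, "rules": [], "precedents": []}
    return {
        "topic": topic,
        "rules": governing_rules(topic),
        "precedents": guidance_for(topic),
    }


if __name__ == "__main__":
    print(answer_context("Mother-in-law suite"))
```

The point of the sketch is how little of it is artificial intelligence. The model only writes the sentence at the end. Everything that decides what the sentence may say is ordinary, inspectable data.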

This is where confusion sets in.

Many governments assume these assets are simply part of the AI product they are purchasing. They are not. A clearer distinction helps.

There is the Contractor, and there are the Blueprints.

The contractor is the AI model itself. ChatGPT, Claude, Gemini, or a vendor-branded assistant with a reassuring logo. It arrives with impressive tools, impressive speed, and no lasting attachment. You will always rent this.

The blueprints are the structured representation of your government’s understanding. Definitions, priorities, exceptions, and accumulated judgment. These are absolutely ownable.

At the moment, many governments are allowing the contractor to sketch the blueprints as they go, store them in the back of the truck, and drive off with them each evening.

The Consequence: Cognitive Lock-In

When the contractor keeps the blueprints, a new risk emerges. It is not financial lock-in, which procurement teams understand well. It is cognitive lock-in, which tends to appear only after switching vendors becomes emotionally exhausting.

Many governments assume AI behaves like traditional software. If the vendor disappoints, you switch. You export your data, grit your teeth, and endure a painful but survivable transition.

AI does not work this way.

If the intelligence of the system lives inside a proprietary Knowledge Layer, the thing you cannot export is understanding. When the contract ends, you retrieve your documents. You do not retrieve the reasoning that made them usable.

The relationships, interpretations, and institutional memory leave with the vendor. The next provider arrives, surveys the half-finished structure, and asks a reasonable question like, “Why is this rule applied differently on the east side of town?” No one knows. The answer was taught to the last system.

At this point, exit remains legally possible and operationally unthinkable. The vendor stays, regardless of cost or performance, because the alternative involves teaching a new system everything your organization already knows but has never written down.

This is how temporary tools become permanent dependencies, without anyone ever voting on it. This is how vendor lock-in captures a government.

The Solution: Knowledge Layer Sovereignty

Local governments already know how to treat infrastructure. Roads, water systems, and records are public assets because the community depends on them functioning predictably.

The Knowledge Layer belongs in the same category.

Knowledge Layer Sovereignty means:

- The local government owns the machine-readable representations of its laws and policies.

- The annotations, logic, and relationships that give those representations meaning are portable.

- Interpretive authority is governed explicitly by the public organization, not buried invisibly inside a vendor’s design choices.

In practice, this changes how we write contracts. It means including clauses that require vendors to use open standards for knowledge representation. It means ensuring that if we switch providers, we export not just our raw text, but the structured logic that explains it.
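What "exporting the structured logic" could look like is easier to see in a small sketch. The Python below assumes a hypothetical knowledge layer held as a plain dictionary and uses JSON as a stand-in for whatever open standard a contract might name. The field names, the `REQUIRED_KEYS` check, and the placeholder entries are all invented for illustration and are not drawn from any real product or schema.

```python
import json

# Hypothetical minimum a government might require in any export.
REQUIRED_KEYS = {"ontology", "overrides", "precedents"}

# Placeholder content: the structure matters here, not the entries.
knowledge_layer = {
    "ontology": {
        "mother-in-law suite": "accessory_dwelling_unit",
        "adu": "accessory_dwelling_unit",
    },
    "overrides": [
        {
            "winner": "2024 Housing Ordinance",
            "loser": "1998 Subdivision Act",
            "reason": "placeholder: why the newer ordinance controls",
        }
    ],
    "precedents": [
        {
            "decision": "Planning Commission decision (placeholder)",
            "topic": "accessory_dwelling_unit",
            "weight": "guidance",
        }
    ],
}


def export_layer(layer: dict) -> str:
    """Serialize the layer to text that any later vendor can read."""
    return json.dumps(layer, indent=2, sort_keys=True)


def import_layer(payload: str) -> dict:
    """Load an exported layer and refuse one that is missing a section."""
    layer = json.loads(payload)
    missing = REQUIRED_KEYS - layer.keys()
    if missing:
        raise ValueError(f"export is missing sections: {sorted(missing)}")
    return layer


if __name__ == "__main__":
    exported = export_layer(knowledge_layer)
    # The round trip is the test: nothing the government knew is lost.
    assert import_layer(exported) == knowledge_layer
    print(exported)
```

A contract clause can be checked the same way. If the vendor's export cannot survive a round trip like this one, the reasoning is not portable, whatever the brochure says.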

This does not prohibit vendors. It clarifies roles. Contractors can build and maintain systems. They do not get to own the reasoning that governs public life.

The Strategic Reality

No local government would accept a contract where a private company builds a road, owns the land beneath it, and retains the right to remove the pavement if negotiations sour. That would feel absurd, even before the potholes appeared.

Yet this is precisely the arrangement many organizations are drifting into with AI and the vendors that sell it.

The choice is not between adopting AI and avoiding it. That decision has already been made by circumstance, staffing levels, and public expectation. The remaining question is quieter, and far more important.

Will local governments rent intelligence that happens to pass through their organization, or will they own the cognitive infrastructure that supports self-governance?

If sovereignty over the Knowledge Layer is not claimed now, while the technology is still young and negotiable, communities may one day discover that they outsourced more than software. They outsourced the machinery of understanding itself, and then politely asked it questions about zoning setbacks.

Author’s Note

This is the first in a short series of articles exploring this emerging issue.

I am not writing about this from a safe theoretical distance. We are actively working through these questions right now in Van Buren and St. Joseph Counties, where the challenges are very real, very practical, and occasionally arrive without warning.

So while this may read like a thought piece, it is better understood as field notes from a problem we are actively trying to solve, with boots firmly on the ground and at least one eye on the agenda.

And, as always, if you think I have overthought this, misunderstood it, or wandered off into the philosophical weeds, the comment section is open.