When a government staff member uploads a document into ChatGPT, NotebookLM, or the latest AI tool their IT department has cautiously approved, something remarkable appears to happen.

You upload a file. You ask a question. You get an answer.

It feels as though the system has calmly read the document, nodded wisely, and responded.

This is comforting. It is also not what happened.

What actually occurred was far less cinematic and far more consequential. Your document passed through an invisible processing layer that quietly stripped out formatting, broke the text into fragments, and made a series of educated guesses about how those fragments might relate to one another.

This layer, not the chatbot itself, determines whether accuracy survives or quietly evaporates. We call it the Knowledge Layer.

AI is not guessing because it is imaginative or mischievous. AI is guessing because government has not told it, in a form machines can reliably use, what actually matters or how the rules fit together.

At the moment, most governments do not own this layer. They rent it, tucked away inside a vendor’s black box. That is why AI in government still produces answers that sound confident, plausible, and occasionally alarming.

The Myth of “The PDF”

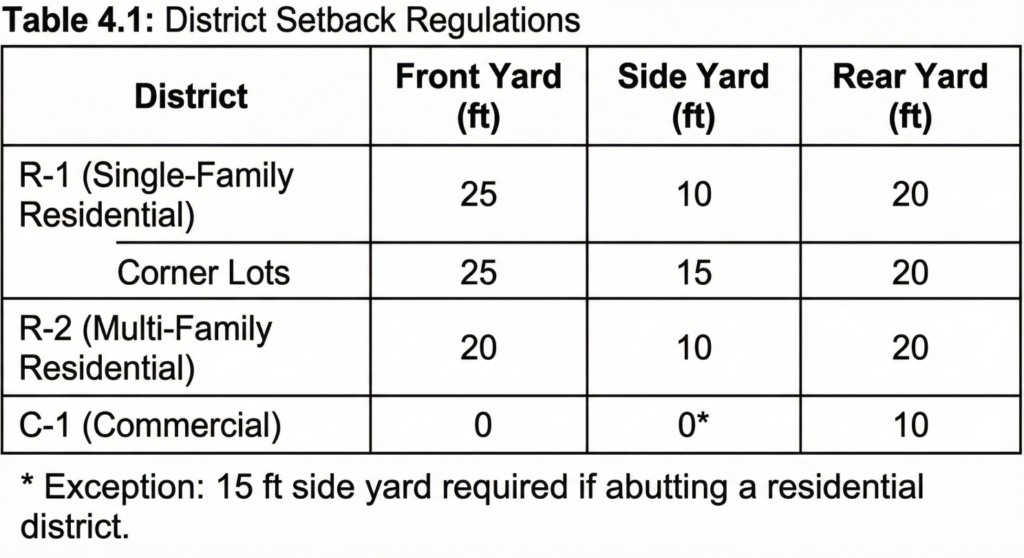

Consider a zoning ordinance, that stalwart companion of planners everywhere.

To a human reader, a setback table is straightforward. Columns labeled “Residential” and “Commercial” behave themselves. Indentation signals an exception. A footnote quietly but decisively modifies a specific row. Your eye knows where to look. Your experience fills in the gaps.

Formatting here is doing real work. Formatting is law.

When that same ordinance is uploaded into an AI system, much of this structure disappears. The Knowledge Layer flattens the document into a stream of text, like a filing cabinet tipped over and swept into a single pile.

- Column headers drift away from their numbers.

- Indentation loses its meaning.

- The visual relationship between a rule and its exception quietly dissolves.
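To make the damage concrete, here is a minimal sketch in Python. The setback table, its values, and its footnote are invented for illustration and do not come from any real ordinance. The sketch reads the cells into a single text stream, cuts that stream into fixed-size chunks the way a deliberately naive pipeline might, and then contrasts the result with a structured version in which each value keeps its row, column, and footnote. Real systems usually chunk more carefully than this, for example with overlap or token counts, but the failure is the same in kind.

```python
# Hypothetical setback table: districts, values, and footnote are invented.
HEADERS = ["Setback", "Residential", "Commercial"]
ROWS = [
    ["Front", "25 ft", "10 ft"],
    ["Side", "8 ft*", "0 ft"],
    ["Rear", "20 ft", "15 ft"],
]
FOOTNOTES = {"*": "Corner lots: side setback increases to 15 ft."}


def flatten(headers, rows, footnotes):
    """Mimic a naive extractor: read cells left to right, top to bottom, into one stream."""
    cells = list(headers)
    for row in rows:
        cells.extend(row)
    cells.extend(f"{mark} {text}" for mark, text in footnotes.items())
    return " ".join(cells)


def fixed_size_chunks(text, size):
    """Cut the stream every `size` characters, with no regard for structure."""
    return [text[i:i + size] for i in range(0, len(text), size)]


def structured_records(headers, rows, footnotes):
    """Keep every value attached to its row label, column header, and footnote."""
    marks = "".join(footnotes)
    records = []
    for label, *values in rows:
        for district, value in zip(headers[1:], values):
            notes = [text for mark, text in footnotes.items() if value.endswith(mark)]
            records.append({"setback": label, "district": district,
                            "value": value.rstrip(marks), "notes": notes})
    return records


for chunk in fixed_size_chunks(flatten(HEADERS, ROWS, FOOTNOTES), 40):
    print(repr(chunk))
for record in structured_records(HEADERS, ROWS, FOOTNOTES):
    print(record)
```

With 40-character chunks, the "8 ft*" cell and the corner-lot footnote that modifies it land in different chunks, and "25" is cut off from its unit. The structured records keep the footnote on the Side/Residential entry, where a reader would expect it.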

If the AI answers correctly, it is often by good fortune rather than good design. If it answers incorrectly, for example by applying a commercial setback to a residential lot, it is not hallucinating.

It is navigating with a damaged map.

The “Lost in the Middle” Problem

Even when the text survives intact, length introduces a different kind of trouble.

AI models tend to use information at the beginning and end of a long document more reliably than information in the middle. The middle, unfortunately, becomes a statistical no-man’s land. Newer models handle this better, but the problem is not solved.

This is awkward for government, where the most important amendment often lives somewhere around page 145.

If the general rule appears early and the quiet amendment that alters it appears much later, the AI may confidently quote the original language, unaware that it has been superseded by something it technically read but failed to weigh appropriately.

The result is not ignorance. It is misplaced certainty.

Government Is a Web, Not a File

The problem becomes more serious once we step outside a single document.

In many organizations, key ideas live in one place. Upload the deck, summarize the plan, move on.

Government does not behave so neatly. Government operates as a web of relationships:

- The zoning ordinance must conform to the comprehensive plan.

- The comprehensive plan is constrained by state law.

- State law is interpreted through guidance, opinions, and court decisions.

- Somewhere, a memo from 2022 quietly changes how everyone actually applies the rule.
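One way to picture this web is as a directed graph in which each edge says "this document is governed by that one." The following is a minimal Python sketch; the document names and relationship labels are hypothetical stand-ins for the examples above. It walks the graph breadth-first to list every source that bears, directly or indirectly, on the zoning ordinance.

```python
from collections import deque

# Hypothetical edges: (document, relationship, governing source).
EDGES = [
    ("Zoning Ordinance", "must conform to", "Comprehensive Plan"),
    ("Comprehensive Plan", "is constrained by", "State Planning Statute"),
    ("State Planning Statute", "is interpreted by", "Attorney General Opinion"),
    ("Zoning Ordinance", "is applied per", "2022 Staff Memo"),
]


def governing_sources(start, edges):
    """Breadth-first walk returning every edge that leads to a source governing `start`."""
    graph = {}
    for doc, relation, source in edges:
        graph.setdefault(doc, []).append((relation, source))
    seen, found = {start}, []
    queue = deque([start])
    while queue:
        doc = queue.popleft()
        for relation, source in graph.get(doc, []):
            if source not in seen:  # visit each source once, even if reached twice
                seen.add(source)
                found.append((doc, relation, source))
                queue.append(source)
    return found


for doc, relation, source in governing_sources("Zoning Ordinance", EDGES):
    print(f"{doc} {relation} {source}")
```

Uploading only the ordinance gives the AI one node out of five. The other four are exactly the context a planner carries in their head.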

Upload only one document, and you have created a silo. The AI may answer accurately within that silo and still be wrong about everything outside it. The text may be correct. The answer may be unusable.

Each new ordinance or amendment does not simply add information. It creates new interactions. One rule bends another. One exception overrides a table. One update quietly reverses an assumption everyone thought was settled.

If those relationships live only in institutional memory, AI will flatten them. When that happens, small misunderstandings accumulate until they become significant errors.

Where the Knowledge Layer Actually Lives

The Knowledge Layer is not your documents. It is not your website. It is not your database. It is not the PDFs stored on a shared drive with an optimistic folder structure.

It is the space in between, where information is transformed so machines can reason over it. This includes:

- Parsing visual layouts into text.

- Breaking documents into pieces (chunking).

- Converting language into machine-readable representations, such as the numeric embeddings many systems use for search.

- Explicitly linking rules, definitions, exceptions, and overrides.

- Determining what is current, authoritative, and applicable.
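A minimal Python sketch of what the last three items can look like in practice follows. Everything in it is hypothetical: the definition, the square-footage limits, the section labels, and the `Chunk` fields are invented for illustration, not drawn from any product. Each chunk carries the defined terms it relies on and a status, and only current chunks, with their definitions attached, are assembled into the text the model sees.

```python
from dataclasses import dataclass, field


@dataclass
class Chunk:
    """One retrievable piece of an ordinance plus metadata a Knowledge Layer might add."""
    chunk_id: str
    section: str                                         # where the text came from
    text: str
    uses_terms: list[str] = field(default_factory=list)  # defined terms the rule relies on
    status: str = "current"                              # "current" or "superseded"


# Hypothetical definitions section (Chapter 1) and rule text (Chapter 12).
DEFINITIONS = {
    "accessory dwelling unit": "A secondary dwelling on the same lot as a primary residence.",
}

CHUNKS = [
    Chunk("c1", "Chapter 12, Sec. 12.3", "An accessory dwelling unit may not exceed 800 sq ft.",
          uses_terms=["accessory dwelling unit"], status="superseded"),
    Chunk("c2", "Chapter 12, Sec. 12.3 (as amended)",
          "An accessory dwelling unit may not exceed 1,000 sq ft.",
          uses_terms=["accessory dwelling unit"]),
]


def build_context(chunks, definitions):
    """Assemble model input from current chunks only, each with its linked definitions."""
    parts = []
    for chunk in chunks:
        if chunk.status != "current":
            continue  # superseded text never reaches the model
        defs = [f'"{t}" means: {definitions[t]}' for t in chunk.uses_terms if t in definitions]
        parts.append("\n".join([f"[{chunk.section}] {chunk.text}", *defs]))
    return "\n\n".join(parts)


print(build_context(CHUNKS, DEFINITIONS))
```

The point of the design is that the Chapter 1 definition travels with the Chapter 12 rule, and the superseded limit is excluded by an explicit flag rather than left for the model to weigh.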

AI never reasons directly over your ordinance. It reasons over this layer.

If the layer is weak or incomplete, the system will still provide answers. It will simply fill the gaps by guessing.

The Black Box Risk

Many vendors are attempting to solve this with enterprise search or retrieval-augmented generation (RAG) systems. The promise is appealing: upload everything and let the AI find the answers.

A few questions are worth asking quietly, before the contract is signed:

- Who decides how documents are processed?

- Who decides that a 2024 amendment overrides a 2010 ordinance?

- Who decides that a definition in Chapter 1 applies to a rule in Chapter 12?

- Who decides how a table spanning three pages should be interpreted?

At present, the answer is usually an algorithm you do not see and cannot adjust.

If that system separates a definition from the rule it modifies, the answer can flip from correct to incorrect. Because the Knowledge Layer belongs to the vendor, you often cannot fix the logic even when you spot the problem, and when you can, the vendor owns the fix.

A Challenge to the Market

This is not just a complaint; it is a customer requirement.

To our vendors, consultants, and the “govtech” innovators knocking on our doors: Quit selling us black boxes.

Government does not need more proprietary voids into which PDFs disappear. It does not need increasingly clever prompts to compensate for broken structure. It does not need institutional knowledge locked inside a single platform.

Here is the opportunity.

The first vendor who builds a simple, government-friendly tool that lets us build, control, and export our own Knowledge Layer will win the market.

We need tools that allow a City Clerk or a Planner to say, clearly and explicitly:

- “This definitions section applies to these three ordinances.”

- “This amendment overrides this specific table.”

- “This state law constrains this local policy.”
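Statements like these are simple enough to record as plain data. Here is a minimal Python sketch; the `Relation` record, the three predicate names, and every document name and date are hypothetical. Given the clerk's explicit "overrides" statements, it answers which source controls a table on a given date, instead of leaving that call to an algorithm.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Relation:
    """A single relationship stated explicitly by a person, not inferred by a model."""
    subject: str
    predicate: str   # hypothetical vocabulary: "applies_to", "overrides", "constrains"
    obj: str
    effective: date
    stated_by: str


# Hypothetical statements mirroring the three examples above.
RELATIONS = [
    Relation("Chapter 1 Definitions", "applies_to", "Ordinance 2010-14", date(2010, 3, 1), "City Clerk"),
    Relation("Amendment 2019-02", "overrides", "Table 12-3", date(2019, 1, 15), "Planner"),
    Relation("Amendment 2024-07", "overrides", "Table 12-3", date(2024, 6, 1), "Planner"),
    Relation("State Statute 163", "constrains", "Local Housing Policy", date(2018, 7, 1), "City Attorney"),
]


def controlling_source(target, relations, as_of):
    """Return the latest override of `target` in effect on `as_of`, or `target` if none."""
    overrides = [r for r in relations
                 if r.predicate == "overrides" and r.obj == target and r.effective <= as_of]
    if not overrides:
        return target
    return max(overrides, key=lambda r: r.effective).subject


print(controlling_source("Table 12-3", RELATIONS, date(2025, 1, 1)))
```

Picking the latest effective date is a simplifying assumption. Real supersession can be partial, or can turn on which provision is more specific, which is exactly why a person should be able to state it rather than have it guessed.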

Most importantly, we need to own that structure. Owning it lets governments change tools without changing truth.

If we switch vendors in five years, we shouldn’t have to rebuild everything from scratch for the new AI. We should be able to take our Knowledge Layer (our relationships, our hierarchy, our logic) and plug it into the new system.
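Portability can be as unglamorous as a documented file format. The minimal Python sketch below assumes a hypothetical JSON layout (the `format` name, field names, and relations are invented; this is not an existing standard). It exports the relationships to human-readable JSON and imports them back, rejecting any relation that is missing a required field.

```python
import json
import tempfile
from pathlib import Path

REQUIRED_FIELDS = {"subject", "predicate", "object", "effective"}

# Hypothetical Knowledge Layer contents: explicit relationships with ISO dates.
LAYER = {
    "format": "example-knowledge-layer",  # hypothetical format name, not a standard
    "version": 1,
    "relations": [
        {"subject": "Chapter 1 Definitions", "predicate": "applies_to",
         "object": "Ordinance 2010-14", "effective": "2010-03-01"},
        {"subject": "Amendment 2024-07", "predicate": "overrides",
         "object": "Table 12-3", "effective": "2024-06-01"},
    ],
}


def export_layer(layer, path):
    """Write the layer as plain, human-readable JSON that any future tool can parse."""
    Path(path).write_text(json.dumps(layer, indent=2, sort_keys=True), encoding="utf-8")


def import_layer(path):
    """Read a layer back and reject relations that are missing required fields."""
    layer = json.loads(Path(path).read_text(encoding="utf-8"))
    for i, rel in enumerate(layer["relations"]):
        missing = REQUIRED_FIELDS - set(rel)
        if missing:
            raise ValueError(f"relation {i} is missing {sorted(missing)}")
    return layer


with tempfile.TemporaryDirectory() as tmp:
    out = Path(tmp) / "knowledge_layer.json"
    export_layer(LAYER, out)
    assert import_layer(out) == LAYER  # round trip preserves every relationship
```

Nothing here depends on a particular AI product. A new system only has to read the file, which is the whole point.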

Why This Matters Now

The Knowledge Layer will be one of the most valuable digital assets government builds in the next decade. It is our institutional memory encoded for machines.

Doing nothing does not pause this process. Staff are already uploading documents. AI systems are already interpreting them. Vendors are already making structural decisions on government’s behalf.

Waiting simply means someone else defines how your rules connect.

We aren’t going to rent our own laws from you. We are going to build the foundation ourselves.

Who is going to help us?