Note: This is the third article in a series about the need for local governments to control how AI accesses and understands public data.

I. The Definition Trap

In local government, the phrase “AI sovereignty” is often misheard as an IT proposal rather than a governance question. It arrives sounding suspiciously like an expensive project disguised as philosophy, the kind that comes with a diagram, a timeline, and a footnote explaining why the budget number has commas.

Discussions about sovereignty reliably collapse into a familiar build-versus-buy dilemma. The assumption quietly takes hold that sovereignty requires hosting complex open-source AI models on county servers, hiring data scientists, or assuming responsibility for infrastructure that evolves more quickly than the annual budget cycle can acknowledge.

If sovereignty required becoming a technology company, the conversation would end here. Local governments are not equipped to develop in-house AI infrastructure, nor should they try. Residents generally expect potholes to be filled before neural networks are tuned.

Fortunately, this framing rests on a category error. It treats intelligence and knowledge as the same thing, confusing the AI model with what we defined in the previous article as the Knowledge Layer.

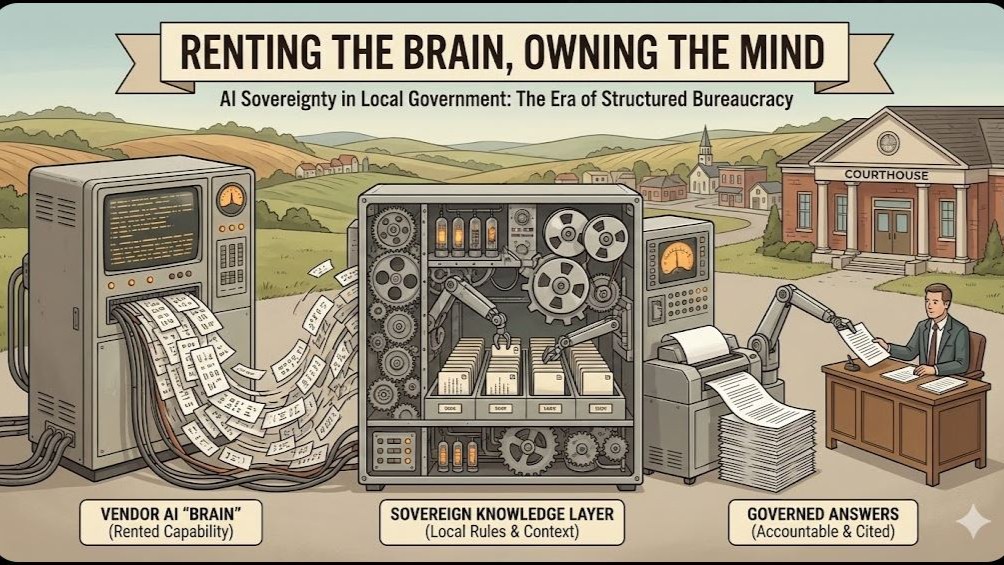

To govern effectively in an age of AI, we do not need to own the machine that performs the reasoning. We need to own the Knowledge Layer: the rules, facts, definitions, and context that give that reasoning meaning. We can rent the brain; the mind, the institutional understanding of how things work here, must remain ours.

The electric grid offers a useful comparison. A county does not operate its own power plant. It purchases electricity as a commodity. Yet the county very much owns the wiring code, the breakers, and the safety rules that determine how that electricity is allowed to flow through a public building. No one confuses buying power with surrendering control, at least not after the first inspection.

AI works the same way at every level of government. It provides capability, not judgment.

It can analyze text, compare rules, and assemble explanations at remarkable speed. What it does not do is understand local intent, political history, or the consequences of being wrong at a public counter at 4:57 p.m.

Whether an answer is right for a particular jurisdiction depends entirely on how meaning is defined, connected, and constrained. That structure does not emerge from the technology itself. It reflects deliberate choices about how a government organizes its laws, records, and policies.

Those choices are governance decisions. And like any governance decision, they carry legal, operational, and political consequences, sometimes all at once.

The ultimate trap is the assumption that the wiring code, the Knowledge Layer, must come bundled with the power supply, the AI. It does not. Once meaning is handed over by default, reclaiming it later tends to involve emergency meetings, retrospective risk assessments, and uncomfortable procurement memos.

II. The False Choice

A second trap appears in the form of a reassuringly simple debate. We are told we can either “adopt AI,” along with all the risks that implies, or decline politely and remain untouched.

This is not how tools enter government.

Interpretation will be automated whether anyone schedules a steering committee or not. It happens when a vendor adds a “Copilot” button to permitting software. It happens when a resident pastes the zoning code into ChatGPT instead of calling the counter. It happens when staff members, drowning in email, use drafting tools to survive the afternoon.

If a county does not explicitly define its Knowledge Layer (the way its laws, definitions, and policy hierarchies actually work in practice), that layer will still exist. It will simply be assembled from default settings and training data pulled from somewhere else, likely somewhere with very different assumptions about setbacks and sewer capacity.

Sidebar: The Risk of “Averaged Meaning”

When a resident asks a generic AI, “Can I build a mother-in-law suite in my backyard?”, the difference between sovereignty and default settings becomes visible.

The Vendor Default (Averaged Meaning): “Yes, usually. Accessory Dwelling Units (ADUs) are generally permitted in residential zones.”

Result: Technically true, legally dangerous. It ignores your specific 2024 moratorium or sewer capacity restriction. Because it sounds authoritative, it is likely to be repeated by residents, staff, and downstream systems that have never attended a planning commission meeting.

The Sovereign Layer (Local Meaning): “Section 4.1 permits ADUs, but Ordinance 22-05 currently restricts new water hookups in District 3.”

Result: Accurate and actionable. The logic comes from your documents, not the model’s training data.

Absent a county-defined Knowledge Layer, meaning is outsourced without anyone signing a contract. There is no systematic way to correct the error, or to ensure the same mistake is not repeated across channels. Since automation will arrive either way, the only responsible administrative choice is to define the terms on which it operates.

Conservative Governance, Not Radical Experimentation

Claiming sovereignty here is not an act of innovation theater. It is an exercise in stabilization.

An AI governed by a sovereign Knowledge Layer does not invent new policy. It does not reinterpret law. It documents the interpretations staff already use and makes them visible, consistent, and reviewable. It ensures that when a machine produces an answer, it relies on your definitions rather than a vendor’s assumptions.

The real choice is between local meaning and averaged meaning. One of these can be governed. The other is merely convenient.

III. What “Ownership” Actually Means: Three Non-Negotiables

At this point, the question is no longer philosophical. It is operational, and it has procurement, legal, and staffing implications. Ownership of the Knowledge Layer sounds abstract until it is reduced to concrete requirements. There are three, and they belong in contracts rather than white papers.

1. Portability of Logic (The “Lobotomy” Test)

Most administrators understand data migration pain. Moving from one ERP system to another is unpleasant, but at least names and balances can be exported. AI introduces a subtler risk: cognitive lock-in. It remains invisible until the day you try to exit a contract.

If a vendor builds a system that “understands” your zoning code, but that understanding lives only inside a proprietary black box, you are borrowing your own institutional memory. Terminate the contract and the understanding disappears with it, usually at the worst possible moment.

The Requirement: You must be able to export not only documents, but the logic that connects them. If the rule stating that a 2024 overlay supersedes a 1998 ordinance exists only inside the vendor’s model, you own a PDF, not a policy.

This does not require a complex rules engine that enforces outcomes. It requires documented relationships and constraints that any future system can read without being re-educated from scratch: which ordinance overrides which, which definitions apply in which districts, and which exceptions are still active.
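To make "documented relationships" less abstract, here is a minimal sketch, assuming each provision is recorded as a plain record with hypothetical fields for the districts it covers, the provisions it supersedes, and whether it is still active. The identifiers (`ORD-1998-07`, `OVERLAY-2024`, `EXC-2019-02`), the district labels, and the function `governing_provisions` are invented for illustration. This is not a rules engine. It only shows that the override relationship can live in data the county owns.

```python
from dataclasses import dataclass
from datetime import date

# A hypothetical record for one adopted provision. Field names and refs are
# illustrative assumptions, not drawn from any real county code.
@dataclass(frozen=True)
class Provision:
    ref: str
    adopted: date
    districts: frozenset      # districts where the provision applies
    supersedes: tuple = ()    # refs of provisions this one overrides
    active: bool = True       # False once an exception lapses or is repealed

def governing_provisions(provisions, district):
    """Return active provisions that apply in `district`, dropping any that
    another applicable, active provision explicitly supersedes."""
    applicable = [p for p in provisions if p.active and district in p.districts]
    overridden = {ref for p in applicable for ref in p.supersedes}
    return [p for p in applicable if p.ref not in overridden]

# Illustrative layer: a 2024 overlay supersedes a 1998 ordinance, but only
# in District 3; a 2019 exception is recorded but no longer active.
layer = [
    Provision("ORD-1998-07", date(1998, 5, 1), frozenset({"D1", "D2", "D3"})),
    Provision("OVERLAY-2024", date(2024, 2, 1), frozenset({"D3"}),
              supersedes=("ORD-1998-07",)),
    Provision("EXC-2019-02", date(2019, 9, 1), frozenset({"D3"}), active=False),
]

print([p.ref for p in governing_provisions(layer, "D3")])  # ['OVERLAY-2024']
print([p.ref for p in governing_provisions(layer, "D1")])  # ['ORD-1998-07']
```

The override is stored as data rather than learned inside a model. Any replacement system can therefore reproduce the same answer by reading the same records.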

2. Explicit Provenance (The “Show Your Work” Rule)

In administrative law, “the computer said so” has never survived contact with an appeal. If a resident relies on an answer that turns out to be wrong, the responsibility lands squarely with the agency. A sovereign Knowledge Layer therefore demands strict provenance.

The Requirement: Every summary, explanation, or draft must trace back to a specific, immutable source document owned by the agency.

Outputs, especially those involving policy, ordinances, or law, should read less like pronouncements and more like annotated memos: “Based on Section 4.2 of the Zoning Ordinance [citation], the requirement is X.” Authority remains with the adopted record, not the generated text. The AI retrieves and assembles; it does not declare. The Knowledge Layer serves as the authoritative operational reference for retrieval, but it never displaces the adopted record as the final legal source of truth.
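One way to make "immutable" concrete is sketched below. It assumes the agency keeps its own registry of adopted documents and pins each document's exact text with a SHA-256 digest from Python's standard `hashlib` module. It then rejects any generated output whose citations are missing, unknown, or point at text that no longer matches the adopted version. The registry, the citation key, the function names, and the sample text are all hypothetical placeholders.

```python
import hashlib

def fingerprint(text: str) -> str:
    """SHA-256 digest of a document's exact text; any change alters the digest."""
    return hashlib.sha256(text.encode("utf-8")).hexdigest()

# Hypothetical agency-owned registry: citation -> digest of the adopted text.
# The sample text is placeholder content, not real ordinance language.
ADOPTED_TEXT = {
    "Zoning Ordinance, Section 4.2": "Minimum rear setback in R-1: 15 feet.",
}
REGISTRY = {cite: fingerprint(text) for cite, text in ADOPTED_TEXT.items()}

def check_provenance(citations: dict) -> list:
    """`citations` maps each cited source to the exact text the system used.
    Returns a list of problems; an empty list means the output may be released."""
    if not citations:
        return ["no citations: output cannot be released"]
    problems = []
    for cite, used_text in citations.items():
        if cite not in REGISTRY:
            problems.append(f"unknown source: {cite}")
        elif fingerprint(used_text) != REGISTRY[cite]:
            problems.append(f"text does not match adopted version: {cite}")
    return problems

print(check_provenance({"Zoning Ordinance, Section 4.2": "Minimum rear setback in R-1: 15 feet."}))
# []
print(check_provenance({"Zoning Ordinance, Section 4.2": "Minimum rear setback in R-1: 10 feet."}))
# ['text does not match adopted version: Zoning Ordinance, Section 4.2']
```

The check sits on the agency's side. It does not matter which model produced the draft, only whether its sources match what was actually adopted.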

3. Vendor Agnosticism (The Universal Adapter)

The AI landscape is volatile. Today’s leading model will eventually join yesterday’s document management platform in the museum of earnest procurements. If your Knowledge Layer can only be read by one vendor’s system, sovereignty has quietly evaporated.

The Requirement: Definitions, tags, and cross-references must live in open, standard formats. Markdown, JSON, and conventional geospatial files may not be glamorous, but they are loyal.

The goal is to swap the brain without performing surgery on the mind. AI models and vendor systems should be treated like toner cartridges: essential, replaceable, and governed by procurement rules rather than strategic dependency. This requirement belongs in procurement language, not just architecture diagrams.
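The sketch below shows what "open, standard formats" buys in practice, using only Python's built-in `json` module. The layer's contents (the district list, the `restricts` relationship type, the field names) are hypothetical and loosely based on the sidebar example. The point is that exporting and re-importing requires nothing from any vendor.

```python
import json

# Hypothetical slice of a Knowledge Layer: one definition, where it applies,
# and one active relationship between adopted documents. Values are illustrative.
layer = {
    "definitions": [
        {
            "term": "Accessory Dwelling Unit",
            "source": "Zoning Ordinance, Section 4.1",
            "districts": ["District 1", "District 2", "District 3"],
        }
    ],
    "relationships": [
        {
            "type": "restricts",
            "from": "Ordinance 22-05",
            "to": "Zoning Ordinance, Section 4.1",
            "districts": ["District 3"],
            "status": "active",
        }
    ],
}

def export_layer(data: dict) -> str:
    """Serialize to plain JSON text with a stable key order."""
    return json.dumps(data, indent=2, sort_keys=True, ensure_ascii=False)

def import_layer(text: str) -> dict:
    """Any system with a standard JSON parser can read the export back."""
    return json.loads(text)

exported = export_layer(layer)
print(import_layer(exported) == layer)  # True: nothing lost in the round trip
```

Sorting keys keeps successive exports comparable line by line. Changes to the layer can then be reviewed the same way ordinance amendments are.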

IV. The Boundary: Explanation vs. Adjudication

Owning the logic requires drawing a clear boundary around what the system is allowed to do.

Municipal attorneys are correct to worry about systems drifting into adjudication. That concern is addressed by drawing a firm line between explanation and decision.

An AI governed by a sovereign Knowledge Layer does not grant permits. It does not issue rulings. It does not replace notice, hearings, or appeals. It drafts and retrieves.

Think of it as a junior planner. It pulls relevant code sections, summarizes requirements, and flags conflicts. It produces a staff report. The Zoning Administrator still signs the permit.

This distinction protects legal defensibility. Outputs are informational drafts based on cited records. Authority and accountability remain with sworn officials, exactly where the law expects them to be. Nothing in this model alters existing standards for legal advice, due process, or administrative review. The system assists with information access; it does not replace professional judgment or legal counsel.
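The boundary can be sketched as a simple rule about who can change a document's status. The sketch assumes a hypothetical `StaffReport` record, a hypothetical list of authorized signing roles, and an invented official's name. The assistant's only capability is to create drafts, and only a named official in an authorized role can turn a draft into a decision. In a real deployment, access controls in the permitting system would enforce this boundary. The sketch only makes the shape of the rule visible.

```python
from dataclasses import dataclass
from typing import List, Optional

# Hypothetical: roles the county has authorized to sign permits.
AUTHORIZED_SIGNERS = {"Zoning Administrator"}

@dataclass
class StaffReport:
    """An informational draft assembled from cited records. It decides nothing."""
    summary: str
    citations: List[str]
    signed_by: Optional[str] = None  # meant to be set only through sign()

    @property
    def status(self) -> str:
        return "decision" if self.signed_by else "draft"

def assistant_draft(summary: str, citations: List[str]) -> StaffReport:
    """Everything the assistant produces is a draft; this function never signs."""
    return StaffReport(summary=summary, citations=list(citations))

def sign(report: StaffReport, official: str, role: str) -> StaffReport:
    """A sworn official reviews the draft and accepts responsibility for it."""
    if role not in AUTHORIZED_SIGNERS:
        raise PermissionError(f"{role!r} is not authorized to sign permits")
    report.signed_by = f"{official}, {role}"
    return report

report = assistant_draft(
    "ADUs permitted under Section 4.1; new water hookups restricted in District 3.",
    ["Zoning Ordinance, Section 4.1", "Ordinance 22-05"],
)
print(report.status)  # draft
sign(report, "J. Doe", "Zoning Administrator")  # hypothetical official
print(report.status)  # decision
```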

V. Human-in-the-Loop Is a Feature, Not a Safety Net

Skeptics often argue that human review eventually collapses under workload pressure. Automation bias is real. When systems speak confidently, people listen. The question is not whether humans remain involved, but what role they are pushed into.

That critique applies most forcefully to black boxes.

When an AI system is required to draw exclusively from a county-defined Knowledge Layer, its outputs become verifiable by design. Provenance is mandatory; citations are not optional.

An unconstrained system might say, “The setback is 15 feet.”

A constrained system instead says, “The setback is 15 feet, based on Section 4.2 of the Zoning Ordinance and modified by the 2023 Floodplain Overlay.”

In the second case, the answer can be verified, explained, and defended.
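The contrast can be sketched in a few lines. The sketch assumes a hypothetical lookup table keyed by topic and zone, and the zone label `R-1` and the table layout are invented. The constrained version answers only when the county's layer has an entry. It builds the citation text from that entry and otherwise declines rather than guessing. In practice a model would phrase the answer; this sketch shows only the constraint.

```python
# Hypothetical Knowledge Layer entries mirroring the example above.
# Values are illustrative, not real code provisions.
LAYER = {
    ("setback", "R-1"): {
        "value": "15 feet",
        "source": "Section 4.2 of the Zoning Ordinance",
        "modified_by": ["the 2023 Floodplain Overlay"],
    },
}

def constrained_answer(topic: str, zone: str) -> str:
    """Answer only from the county's layer; never fall back to a model's guess."""
    entry = LAYER.get((topic, zone))
    if entry is None:
        # No county-defined source: decline and route to staff.
        return f"No adopted source found for {topic} in {zone}; refer to planning staff."
    basis = f"based on {entry['source']}"
    if entry["modified_by"]:
        basis += " and modified by " + ", ".join(entry["modified_by"])
    return f"The {topic} is {entry['value']}, {basis}."

print(constrained_answer("setback", "R-1"))
# The setback is 15 feet, based on Section 4.2 of the Zoning Ordinance and modified by the 2023 Floodplain Overlay.
print(constrained_answer("height limit", "R-1"))
# No adopted source found for height limit in R-1; refer to planning staff.
```

The decline path matters as much as the answer path. A constrained system that has no source stays silent and hands the question to staff.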

The staff member’s role shifts from data retrieval to verification and judgment. The system handles the structured certainty and codified discretion already embedded in policy. The human applies judgment where discretion has not, or cannot, be formally defined. Accountability remains human.

VI. The Path Forward

If sovereignty reduces lock-in and mitigates automation bias, the remaining barrier is not technological. It is organizational.

The challenge is no longer access to capability, but clarity about how a government’s own rules, records, and interpretations fit together.

Building a Knowledge Layer means structuring the ordinances, policies, and records you already have so that meaning can be exported intact. AI changes how explanations are delivered, not who holds authority. The Knowledge Layer exists to preserve that distinction under automation.

It is a governance task, not an engineering one. In principle, a vendor could provide this kind of control, but only if it allowed full transparency, portability, and ownership at a price accessible to small local governments.

In practice, such offerings do not yet exist.

The remaining question is practical. What does a minimal, county-owned Knowledge Layer look like in a county IT department with three staff members and a heroic helpdesk ticketing system?

That, mercifully, is the subject of the next article.

Author’s Note

Readers with technical backgrounds will recognize many of the ideas in this article under different names. In the technology world, similar concepts are discussed as knowledge graphs, semantic layers, retrieval-augmented generation, policy-as-data, or model grounding. None of this is new in principle.

What is new is the context.

Local government operates under legal, political, and administrative constraints that most commercial systems are not designed to respect. The language used in this series intentionally avoids technical jargon, not to oversimplify the ideas, but to make the governance implications visible to the people who ultimately bear responsibility for them.

This article reframes familiar technical concepts as administrative obligations: ownership, provenance, portability, and authority. Those are not engineering preferences. They are conditions of lawful and defensible governance.

The next article moves from theory to practice. It does not argue that counties should become technology companies. It examines what minimal structure is required and achievable, given current market limitations, to retain control of the Knowledge Layer while continuing to rent AI capability from vendors.