How Van Buren and St. Joseph Counties are building a sovereign AI architecture for local government.

This document describes where we are headed. It is a roadmap for something under construction rather than a ribbon-cutting speech for something finished.

Over the past year, most conversations about AI in local government have taken place in the familiar territory of prohibition.

- No personal accounts.

- No sensitive data.

- No unapproved tools.

These policies are necessary. Anyone responsible for public records, legal exposure, or IT security will confirm that with admirable enthusiasm.

Yet policy has a structural disadvantage when competing with utility. If the official tool provided by the county isn’t as good as the AI app on an employee’s phone, the phone tends to win with quiet consistency.

The result is not merely a compliance problem. It produces something closer to a records management black hole. Interactions that may constitute public records occur on private servers, through personal devices, outside the field of view of FOIA coordinators, records managers, and security staff. From an institutional perspective, the conversation simply vanishes.

After watching this dynamic unfold, we reached a fairly simple conclusion. The durable solution is not stricter policy. The durable solution is better infrastructure. That infrastructure is what we are building. We refer to it as a Sovereign AI Stack.

What “Sovereign” Actually Means

Sovereign, in this context, does not mean Van Buren or St. Joseph County is attempting to train its own frontier AI models. County government has many admirable qualities, but spare supercomputers are not currently among them.

Sovereign means something more practical. The counties control the interface, knowledge infrastructure, and governance layer through which staff interact with AI. The underlying model could be Claude, Gemini, a GPT-class system, or something that has not yet appeared on a vendor roadmap. The specific engine matters less than the layer between our staff and that engine. When the counties control that layer, several things become possible.

- Auditable logs. Staff interactions can be captured in ways that support records retention and FOIA compliance. The system is designed with accountability in mind rather than retrofitted later.

- Data control. Institutional knowledge lives in repositories we manage, indexed and structured independently of any vendor’s terms of service or data retention policy.

- Model agility. AI capabilities and pricing will change dramatically over the next several years. Controlling the interface allows us to change models without retraining the entire workforce every time the market shifts.

- Cost control. API-based usage lets us scale access across departments while paying for actual usage rather than theoretical access through per-seat licenses.

In short, sovereignty means the counties retain authority over how AI is used, recorded, and integrated into daily operations.
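
To make the idea of a county-controlled layer concrete, here is a minimal sketch of what such a layer might look like in code. It is an illustration under stated assumptions, not a description of our production system: the `Gateway` class, the `ask` and `switch_model` methods, and the two stand-in "models" are all hypothetical names invented for this example. A real deployment would wrap vendor API calls behind the same function shape.

```python
import hashlib
import json
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Callable, Dict, List

# A "backend" is any function that takes a prompt and returns text.
# Assumption: real vendor calls would be wrapped to fit this shape.
Backend = Callable[[str], str]


@dataclass
class Gateway:
    """Hypothetical county-controlled layer between staff and any model."""
    backends: Dict[str, Backend]
    active: str
    audit_log: List[dict] = field(default_factory=list)

    def ask(self, user: str, department: str, prompt: str) -> str:
        response = self.backends[self.active](prompt)
        record = {
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "user": user,
            "department": department,
            "model": self.active,
            "prompt": prompt,
            "response": response,
        }
        # Content hash that a records system could store separately
        # to help detect later edits to the logged record.
        record["sha256"] = hashlib.sha256(
            json.dumps(record, sort_keys=True).encode("utf-8")
        ).hexdigest()
        self.audit_log.append(record)
        return response

    def switch_model(self, name: str) -> None:
        # Staff keep the same interface; only the engine changes.
        if name not in self.backends:
            raise KeyError(f"unknown backend: {name}")
        self.active = name


# Stand-in models for illustration only; they just echo the prompt.
def model_a(prompt: str) -> str:
    return f"[model-a] {prompt}"


def model_b(prompt: str) -> str:
    return f"[model-b] {prompt}"


gateway = Gateway(backends={"model-a": model_a, "model-b": model_b}, active="model-a")
first = gateway.ask("jdoe", "planning", "Summarize the setback rules.")
gateway.switch_model("model-b")
second = gateway.ask("jdoe", "planning", "Summarize the setback rules.")
```

The point of the sketch is that every interaction passes through one place the counties own. Logging, retention, and the choice of engine are decided there, not inside a vendor product.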

The Knowledge Layer Is the Real Differentiator

The chatbot interface itself is the least interesting part of the architecture. It is essentially a doorway. What matters is the building behind it.

The internal AI portal connects to a curated repository of county-specific knowledge: ordinances, meeting minutes, departmental procedures, GIS datasets, planning reports, and the many documents that quietly determine how government actually functions.

When staff use the portal, they are not simply querying a generic model trained on the internet. They are interacting with a system grounded in the regulatory, geographic, and operational context of our counties.

What makes the system grow more useful over time is the feedback loop created by daily work. Documents uploaded by staff, vetted reports, departmental guidance, and even the logged interactions themselves gradually become part of the institutional knowledge layer. Each addition strengthens the system’s understanding of how the counties operate in practice rather than merely in theory.

In other words, the system improves as the organization uses it. A planning commission report today becomes contextual knowledge for a zoning question six months from now. A frequently referenced procurement document quietly evolves into institutional memory.

The effect is cumulative. The system begins to reflect the operational intelligence of the organization itself.

This architecture also allows the interface to adapt to the reality that county government is not a single profession. A planner, a GIS analyst, a sheriff’s office administrator, and a finance officer all interact with different regulations, datasets, and documents. Rather than presenting everyone with the same generic assistant, the portal can be configured around departmental or job-function contexts. A planner might see zoning ordinances, parcel data, and planning commission minutes prioritized in their workspace. Finance staff may interact primarily with procurement policies, budgets, and audit guidance. GIS users may have spatial datasets and mapping tools integrated directly into their interface.

The underlying system remains the same. The doorway simply opens into different rooms depending on who is walking through it.
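
One simple way to picture "different rooms" is a mapping from job function to the knowledge collections searched first. The sketch below assumes this approach for illustration. The collection names echo the examples above, while `WORKSPACES`, `DEFAULT_COLLECTIONS`, `general_policies`, and `collections_for` are hypothetical names, not our actual configuration.

```python
from typing import Dict, List

# Hypothetical mapping of job function to prioritized collections.
WORKSPACES: Dict[str, List[str]] = {
    "planning": ["zoning_ordinances", "parcel_data", "planning_commission_minutes"],
    "finance": ["procurement_policies", "budgets", "audit_guidance"],
    "gis": ["spatial_datasets", "mapping_tools"],
}

# Assumed shared collection that every workspace can fall back on.
DEFAULT_COLLECTIONS: List[str] = ["general_policies"]


def collections_for(department: str) -> List[str]:
    """Return the department's prioritized collections, then shared defaults."""
    prioritized = WORKSPACES.get(department, [])
    extras = [c for c in DEFAULT_COLLECTIONS if c not in prioritized]
    return prioritized + extras
```

With this shape, `collections_for("planning")` lists the zoning ordinances first, while a department with no dedicated workspace simply receives the shared defaults. The model and the portal stay the same; only the ordering of sources changes.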

That distinction matters because the real value of institutional AI does not come from the model itself. It comes from the knowledge, documents, and operational experience that only the organization possesses. No vendor can package that knowledge in advance. It emerges gradually from the accumulated work of the people inside the institution, and in local government, that work tends to be both extensive and occasionally hidden in filing cabinets that predate the internet.

Cite Your Work

There is one more design decision embedded in the interface that deserves mention, because it addresses a question every responsible government official should be asking about AI: how do you know it didn’t fabricate that?

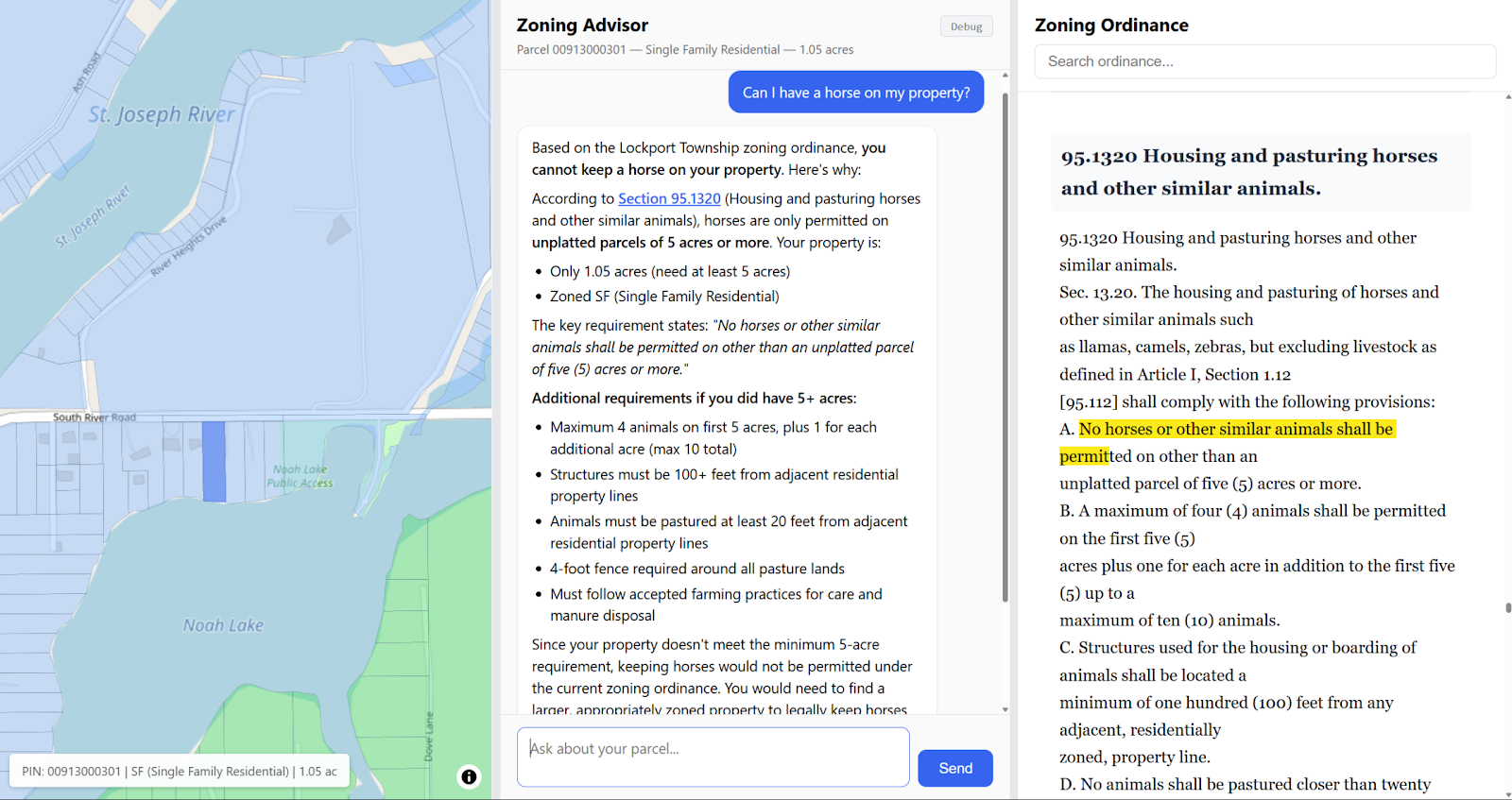

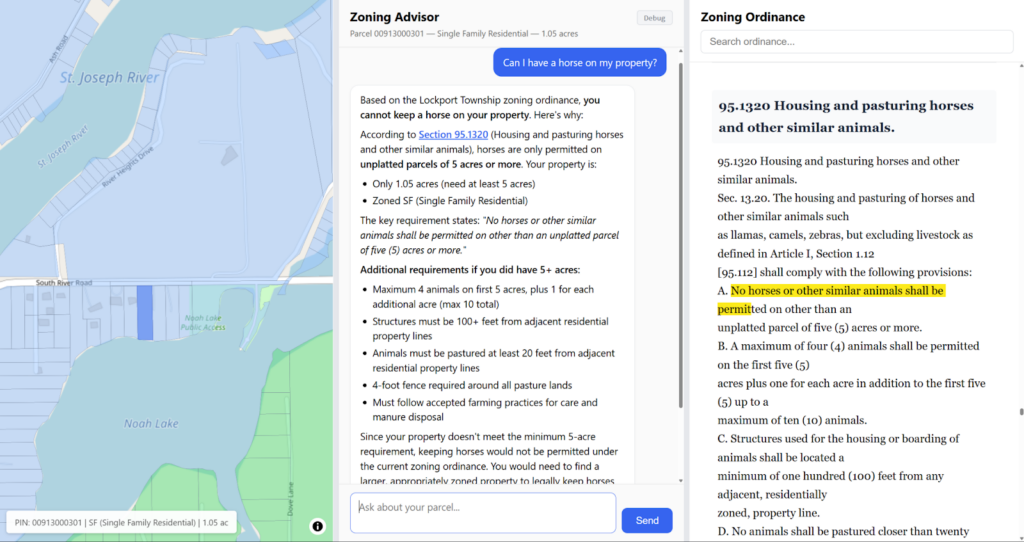

Our portal is designed as a multi-panel system. The AI response appears in one panel. The source documents it drew from appear in adjacent panels, with the relevant passages highlighted and cited. Staff do not have to take the system’s word for anything. They can see exactly which section of which ordinance, which paragraph of which meeting minute, which line of which departmental procedure informed the response.

This is not a cosmetic feature. It does three things simultaneously. First, it addresses the hallucination problem for practical government use: an AI response grounded in a visible, highlighted source document is a fundamentally different instrument from one generated from the open internet. Second, it makes AI-assisted work auditable and defensible under FOIA, because the evidentiary trail is built into the interaction itself. Third, over time, it builds the kind of institutional trust that determines whether staff actually use the system or quietly revert to the phone in their pocket.

Transparency at the response level is what makes the knowledge layer meaningful in practice rather than merely in theory.
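
The sketch below shows the shape of the idea: every answer carries the passages it was grounded in, and when nothing relevant is found, that is reported as such. It is deliberately simplistic and rests on assumptions. The keyword-overlap scoring is a stand-in for whatever retrieval method a real system would use, and the sample passages, `Passage`, `retrieve`, and `cite` are all invented for illustration.

```python
import re
from dataclasses import dataclass
from typing import List, Set

# Small illustrative stopword list so common words do not count as matches.
STOPWORDS: Set[str] = {"a", "an", "the", "is", "of", "for", "in", "what", "must"}


@dataclass(frozen=True)
class Passage:
    document: str  # e.g. an ordinance title
    section: str   # e.g. a section number
    text: str


def tokenize(text: str) -> Set[str]:
    return set(re.findall(r"[a-z0-9]+", text.lower())) - STOPWORDS


def retrieve(question: str, passages: List[Passage], top_k: int = 2) -> List[Passage]:
    """Rank passages by shared keywords; drop passages with no overlap."""
    query = tokenize(question)
    scored = [(len(query & tokenize(p.text)), p) for p in passages]
    scored = [(score, p) for score, p in scored if score > 0]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [p for _, p in scored[:top_k]]


def cite(passages: List[Passage]) -> List[str]:
    return [f"{p.document}, {p.section}" for p in passages]


# Invented sample text; not quoted from any real ordinance or policy.
CORPUS = [
    Passage("Sample Zoning Ordinance", "Sec. 4.2",
            "Accessory buildings must observe a rear setback of ten feet."),
    Passage("Sample Zoning Ordinance", "Sec. 7.1",
            "Signs in commercial districts require a permit."),
    Passage("Sample Procurement Policy", "Sec. 2",
            "Purchases above the bid threshold require sealed bids."),
]

sources = retrieve("What is the rear setback for accessory buildings?", CORPUS)
citations = cite(sources)
unsupported = retrieve("How tall can a fence be?", CORPUS)
```

An empty result, as in the fence question, is a useful signal in its own right: the interface can tell staff that no source was found instead of presenting an unsupported answer.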

A Tiered Architecture

Not every task carries the same risk profile, and not every user needs the same level of capability. The architecture reflects that reality.

Tier 1: The General Staff Portal. The primary interface for most employees. It supports everyday drafting, research, policy lookup, and analysis within an auditable environment. The goal is straightforward: the official tool should also be the easiest tool to use. When that condition is met, compliance tends to improve naturally.

Tier 2: Power User Access. Advanced model access for members of the AI Task Force and technical staff. Participation requires training and involvement in the counties’ digital governance framework. Experimentation happens here, but it happens in the open and with accountability.

Tier 3: Secure Routing for Sensitive Workloads. Local governments handle data that cannot move freely through commercial AI infrastructure: CJIS data, HIPAA-regulated information, investigative records, and similar materials. Our long-term design includes a routing layer that can direct sensitive workloads to locally controlled models or protected environments. In the near term, staff move these workloads into restricted systems manually. One might call this the difference between deliberate experimentation and avoidable recklessness.
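
As a rough illustration of what a Tier 3 routing decision could look like, the sketch below picks a destination from sensitivity labels attached to a request. Everything here is an assumption made for the example: the label names, the `Route` enum, and `route_for` are hypothetical, and how requests would be labeled in the first place is left open. The choice to send unlabeled requests down the restricted path is a conservative design assumption, not a settled policy.

```python
from enum import Enum
from typing import FrozenSet, Set


class Route(Enum):
    COMMERCIAL_API = "commercial_api"
    LOCAL_MODEL = "local_model"


# Hypothetical labels, assumed to be applied before the request reaches the router.
RESTRICTED_LABELS: FrozenSet[str] = frozenset({"cjis", "hipaa", "investigative"})


def route_for(labels: Set[str]) -> Route:
    """Choose a destination for a request based on its sensitivity labels."""
    # Fail closed: a request with no labels is treated as sensitive.
    if not labels or labels & RESTRICTED_LABELS:
        return Route.LOCAL_MODEL
    return Route.COMMERCIAL_API
```

Under these assumptions, a request labeled `{"hipaa", "draft"}` stays on locally controlled infrastructure, while one labeled only `{"public"}` may go to a commercial model.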

Beyond the Chatbot: Agentic Capabilities on the Horizon

A conversational AI portal is the foundation. It is not the ceiling.

The architecture we are building is designed from the outset to support agentic capabilities: systems that do not merely answer questions but take action. Drafting a FOIA response and routing it through the appropriate review chain. Flagging a parcel change in GIS and triggering a zoning notification workflow. Generating permit or licensing documents from structured inputs without requiring staff to start from a blank page.

We are not deploying these capabilities today. The sequencing is deliberate. Agentic systems operating inside government workflows carry a different category of risk than a staff member asking a chatbot to summarize a regulation. When AI acts rather than advises, the governance layer has to be mature enough to catch errors before they propagate through a public process.

Our position is that the knowledge infrastructure and audit architecture we are building now is the prerequisite for agentic work done responsibly. The sovereign stack is not just a better chatbot. It is the foundation on which automated government workflows can eventually run: accountable, auditable, and under institutional control rather than delegated to a vendor’s automation platform.
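
One way to picture the governance requirement is an approval gate: an AI-proposed action cannot run until a named person signs off, and both the refusal and the execution are logged. The sketch below is a hypothetical illustration of that pattern, not something we have deployed. `ProposedAction`, `run`, and the reviewer name are invented for the example.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional


@dataclass
class ProposedAction:
    """An action an AI system wants to take, pending human review."""
    description: str
    execute: Callable[[], str]
    approved_by: Optional[str] = None


def run(action: ProposedAction, audit: List[str]) -> str:
    """Execute an action only if a human reviewer has approved it."""
    if action.approved_by is None:
        audit.append(f"BLOCKED (no approval): {action.description}")
        raise PermissionError("action requires human approval")
    audit.append(f"RUN (approved by {action.approved_by}): {action.description}")
    return action.execute()


audit: List[str] = []
draft = ProposedAction(
    description="Route drafted FOIA response to review chain",
    execute=lambda: "routed",  # placeholder for a real workflow step
)
```

Calling `run(draft, audit)` before anyone has approved the action raises `PermissionError` and records the attempt. After a reviewer sets `draft.approved_by`, the same call executes and logs who approved it.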

That work is on the roadmap. We are building toward it carefully.

Why Not Simply Buy an Enterprise AI Suite?

Several major technology vendors now offer AI products designed for government. We have evaluated them seriously. Three structural challenges appear repeatedly.

Vendor lock-in at the knowledge layer. Enterprise suites often bundle the interface, the model, and the knowledge infrastructure into a single platform. Institutional knowledge then becomes intertwined with a vendor’s system, and switching costs increase quietly each year. The sovereignty problem does not disappear. It merely relocates.

Cost structures that do not match local government. Per-seat licensing assumes uniform and frequent usage. County governments operate differently. A planner, GIS analyst, or policy staff member may use AI daily. Other staff may use it occasionally. API-based models allow the counties to pay for actual usage rather than universal access.
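
The arithmetic behind this point can be sketched in a few lines. Every number below is made up purely to show the shape of the comparison: the staff count, seat price, token volumes, and per-token rate are illustrative assumptions, not our actual figures or any vendor’s pricing.

```python
from typing import List


def per_seat_cost(staff: int, price_per_seat: float) -> float:
    """Monthly cost when every staff member needs a license."""
    return staff * price_per_seat


def usage_cost(monthly_tokens_by_user: List[int], price_per_million_tokens: float) -> float:
    """Monthly cost when paying only for tokens actually consumed."""
    return sum(monthly_tokens_by_user) / 1_000_000 * price_per_million_tokens


# Illustrative assumptions only: 10 daily users, 90 occasional users.
daily_users = [2_000_000] * 10
occasional_users = [50_000] * 90

seat_total = per_seat_cost(staff=100, price_per_seat=30.0)
usage_total = usage_cost(daily_users + occasional_users, price_per_million_tokens=10.0)
```

With these invented inputs, the per-seat total is 3000.0 and the usage-based total is 245.0. The comparison can flip when usage is heavy and uniform, which is why the mix of daily and occasional users matters more than any single price.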

Limited institutional customization. Generic assistants rarely understand the procedural rhythms of a rural Michigan county government. They are unfamiliar with local ordinance structures, departmental workflows, and the various ways real-world governance deviates from tidy software assumptions. A vendor product can be configured. Institutional knowledge, however, has to be built.

Where We Are

Our work is a little further along than the word “roadmap” might suggest.

We have already built functional AI chatbots and integrated them with our GIS infrastructure, an architecture that grounds AI responses in the actual spatial and parcel data of our counties rather than generic geographic knowledge. We are actively ingesting zoning ordinances and other municipal documents into the knowledge layer, carefully and with deliberate attention to document quality. Early results have been encouraging enough that we are now working to design the system for scale: a structured, adoptable internal model that other departments, and eventually other counties, could deploy without rebuilding from scratch.

Figure: our multi-panel AI interface, shown as part of our AI Zoning Assistant. The AI chatbot (center) is synchronized with, and grounded in, the GIS data (left) and the zoning ordinance and supporting documents (right).

That last part is the honest constraint. Small government does not have the luxury of dedicated engineering sprints. Progress is real but paced by the reality that the staff doing this work are also running regular county operations. The architecture is sound. The question now is velocity.

The work is happening through DICE, the Digital Innovation Collaborative Exchange, a joint initiative operating across both counties focused on GIS, AI, automation, and digital governance. The design philosophy is intentionally modest: keep the structure lean, iterate frequently, and document decisions in the open. Local government technology projects rarely benefit from excessive mystery.

A Note to Peer Counties

The problems this architecture attempts to address are not unique to Van Buren and St. Joseph Counties. Shadow AI usage appears in nearly every organization where policy has outpaced infrastructure. The cost mismatch between enterprise licensing and API-based access affects most small and mid-sized governments.

We started where most counties start: with policies and good intentions. The difference is that we concluded prohibition alone doesn’t close the loop. Someone still has to build the alternative.

We are documenting our design decisions and sharing what we learn as the system evolves. If your county is working through similar questions, we would welcome the conversation. The AI era is arriving faster than most procurement cycles, and rural county governments rarely have the luxury of solving large technological shifts in isolation.

So what do you think? Is this the right direction for local government?

DICE — Digital Innovation Collaborative Exchange. A joint initiative of Van Buren County and St. Joseph County, Michigan, focused on GIS, AI, automation, and digital governance.